From Open to Fortress: OpenAI's Patent Record

What happens when the world's most valuable AI company tries to patent its way out of seven years of openness.

In 2015, OpenAI published a founding statement that read, in part: "Our goal is to advance digital intelligence in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return." Elon Musk, who put up $38 million of the initial funding and claimed credit for choosing the name, later said on X that the "Open" was meant to signal open-source. The company would counterbalance Google, which had just acquired DeepMind, by doing its research in public.

Ten years later, OpenAI's reported valuation has climbed past $800 billion, according to a Reuters report on its early 2026 funding round. Its for-profit business now operates as OpenAI Group PBC, a public benefit corporation under nonprofit control. It is entangled in copyright lawsuits with the New York Times, among others. Musk, who left in 2018 and later sued the company for abandoning its original mission, has accused it of becoming "a closed source, maximum-profit company effectively controlled by Microsoft." The board crisis of late 2023, in which Sam Altman was fired and rehired in the span of five days, made international news for weeks.

All of these developments have been covered extensively. What has not been covered, at least not in any comprehensive way, is what OpenAI was doing in a federal filing system in Alexandria, Virginia, while the rest of the world was focused on the drama.

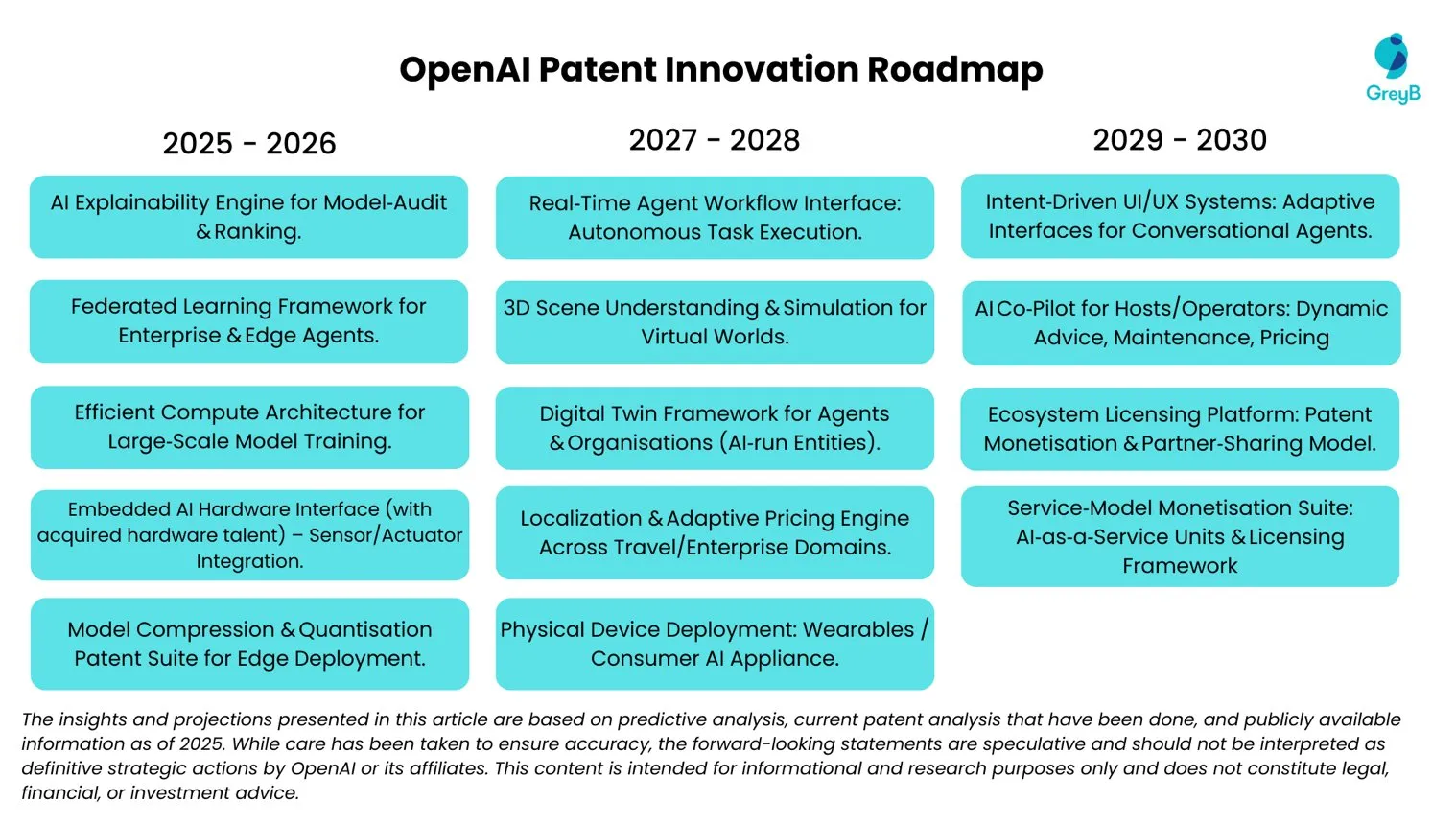

Third-party patent analytics firms estimate that, between 2022 and 2025, OpenAI built a portfolio of roughly 110 patent assets globally across about 58 patent families, with approximately 42 grants (counts vary by methodology and date). The filings are public record; anyone can read them. Almost nobody does.

We did, and what we found adds a layer to the OpenAI story that the company's public narrative tends to skip over. OpenAI has been open about its transition from nonprofit to commercial enterprise. What the patent record reveals, however, is the cost of how that transition happened: an organization that treated intellectual property as irrelevant for seven years, realized the problem only after its biggest products were public, and then mounted a scramble to build protections that, in several specific and permanent ways, came too late.

Figure: The Seven-Year Void. Corporate milestones and patent filings, 2015-2025 (timeline markers: Dec 2015, Feb 2018, Jul 2019, Nov 2022, Jan 2023, Mar 2023, Nov 2023, 2025).

The first seven years are empty. Then a sudden cluster starting mid-2022, growing denser through 2023 and 2024. The visual contrast between the void and the burst is the whole thesis in one image.

The Void

OpenAI's founding team included Musk, Altman, Greg Brockman (from Stripe), Ilya Sutskever (recruited from Google, where he'd studied under Geoffrey Hinton), and several researchers freshly out of top PhD programs. MIT Technology Review, in a profile published a few years after the founding, described the venture as "the AI moonshot, founded in the spirit of transparency." The nonprofit structure was itself a statement: important research institutions, the founders believed, should not be driven by shareholder returns.

That philosophy extended to intellectual property. No publicly visible OpenAI patent family appears before mid-2022; for the first seven years of the company's existence, the public patent record is empty. (It is possible that unpublished provisional applications were filed during this period, but none have surfaced in the public record, and no granted patent or published application claims priority from one.)

The context makes this remarkable. By 2022, incumbents like Google, Microsoft, and IBM each held thousands of AI-related patents, with portfolios built over a decade or more. WIPO's 2024 patent landscape report on generative AI flagged OpenAI's absence from the patent record specifically, calling it a strategic vulnerability.

But early OpenAI wasn't thinking in those terms. Patents require full public disclosure of how an invention works, in exchange for a twenty-year monopoly. If you're already publishing your research freely on arXiv and GitHub, you have made the disclosure anyway, and the monopoly offered in return is worthless if you don't intend to enforce it. So the IP strategy, such as it was, rested on trade secrets: keep the training data, the compute configurations, and the operational know-how locked inside the building, and let the science flow freely.

There is even a revealing exchange, published by OpenAI in 2024 as part of its legal dispute with Musk, in which Sutskever wrote to Musk:

"As we get closer to building AI, it will make sense to start being less open. The Open in openAI means that everyone should benefit from the fruits of AI after its built, but it's totally OK to not share the science."

Ilya Sutskever to Elon Musk, published by OpenAI in 2024

Musk replied: "Yup."

The seed of the shift was there from the beginning. But for years, no one acted on it, because there was nothing commercial to protect. OpenAI was producing research, not revenue.

That changed in 2022.

The Trigger

The first patent filing in OpenAI's history carries a priority date of July 2022, four months before ChatGPT launched.

We can't reconstruct the internal conversation from public records, but the timing tells a story on its own. After seven years of treating the patent system as irrelevant, somebody decided otherwise at almost exactly the moment the company's most important product was about to ship.

ChatGPT launched on November 30, 2022, and within two months, it had a hundred million users, faster adoption than any consumer technology in history. Microsoft closed a $10 billion investment in January 2023. Altman told a Senate subcommittee in May 2023 that if the technology "goes wrong, it can go quite wrong," while simultaneously presiding over the fastest-growing consumer product the industry had ever seen.

The patent filings came in waves. An analysis by InQuartik, using its Patentcloud platform, found that OpenAI's filing activity spiked in March and April 2023, coinciding almost exactly with the launch of GPT-4 on March 14. A second wave hit in August and September. The pattern is consistent across the portfolio: OpenAI was filing patents on technologies that were already public and generating revenue.

This is the inverse of standard practice. The conventional approach is to file before you ship, sometimes years before, to establish priority and build a portfolio that makes potential competitors think twice before entering your space. What OpenAI was doing was more like retroactive fortification. They had released one of the most commercially successful products in the history of software with no patent protection whatsoever, and now they were trying to build legal walls around something the world was already using.

The urgency showed in the prosecution strategy, too. The average U.S. patent application takes about twenty-four months from filing to grant. OpenAI averaged roughly eleven. They achieved this using the USPTO's Track One program, which allows any applicant to pay a premium of a few thousand dollars per filing for prioritized examination. (That said, this is not unusual for well-funded companies; Apple, Google, and many venture-backed startups use Track One routinely.)

Figure: Time to Patent Grant, with Track One.

What They Protected (And What They Left Exposed)

The portfolio that emerged is compact, which is expected for its age, and its shape tells you something about how OpenAI's leadership thinks about the relationship between the company's technology and its business model.

The roughly 110 patent assets, spanning about 58 patent families, cluster into five areas: the multimodal interfaces that let users interact with models across text, images, and audio; the code generation systems built on Codex; the text generation and editing workflows that power ChatGPT; the image generation architecture behind DALL-E; and the API layer that connects third-party applications to OpenAI's platform.

However, what's absent from the portfolio is what's worth paying attention to. The visible portfolio appears heavily weighted away from the general-purpose foundational techniques that power OpenAI's entire product line: transformer architecture, attention mechanisms, reinforcement learning from human feedback, the core training methodologies that are reusable building blocks across GPT, DALL-E, Whisper, and everything else the company ships. In other words, the portfolio does not contain the kind of broad foundational claims over core LLM techniques that could, in principle, block competitors from building rival systems altogether.

Figure: The Two-Layer Strategy. What gets patented vs. what stays secret. Patented layer: requires full disclosure, protects specific products. Trade-secret layer: no disclosure required, few if any visible patents.

This seems to be a deliberate strategic choice, and it is the most sophisticated thing about the entire portfolio. Patents last twenty years but require public disclosure. For core product interfaces and API designs, which would be impossible to keep secret and have significant commercial relevance, the trade-off is worth it.

However, for general-purpose foundational techniques, which evolve on a cycle of months, are reused across every product, and represent the company's deepest competitive advantage, the calculus goes the other way. Trade secrets don't expire and don't require disclosure. By the time a patent on a foundational training technique works its way through the USPTO, the technique may already be obsolete, and the disclosure requirement will have handed competitors a detailed roadmap for free.

So the company patented the product layer and kept the foundations as trade secrets. The practical consequence is that OpenAI's patents likely do not prevent anyone from building a competing large language model. You can train your own model, using your own architecture and data, and the OpenAI portfolio likely won't stop you. What it protects are implementations tied to specific products: the particular way ChatGPT handles text editing (U.S. Patent No. 11,983,488), the specific two-model pipeline DALL-E uses to go from text to image (U.S. Patent No. 11,922,550), the method by which external APIs plug into the platform (U.S. Patent No. 11,922,144).

The claims, up close

How broad are OpenAI's protections? It depends which patent you're looking at.

The text generation patent (11,983,488) contains an independent claim, Claim 10, that covers a method involving receiving a text prompt, receiving user instructions, accessing a language model, generating output, and editing the original text by replacing portions with the model's output. At a high level, that describes the basic workflow of almost every LLM-powered text editor on the market, not just ChatGPT. The IPKat, an intellectual property law blog, flagged the term "user instructions" as appearing six times in the claim without meaningful specificity. A European patent examiner, the blog's commenters noted, would almost certainly reject this claim for insufficient clarity. At the USPTO, it was granted.

The DALL-E patent (11,922,550) is a different story. Its independent claim requires a text encoder, a first sub-model that generates an image embedding from text input, and a second sub-model that produces a final image from that embedding. It is more technically precise than the text editing claim and likely harder to challenge, but it is also narrower: a competitor using a different pipeline to get from text to image (a single-stage diffusion model, for instance) likely would not infringe it.
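The reasoning here follows the general rule that a claim is literally infringed only when an accused system contains every element the claim recites. A minimal sketch of that check is below. It is a deliberate simplification: the element labels are hypothetical shorthand for the three requirements summarized above, not the patent's actual claim language, and real infringement analysis turns on claim construction and the doctrine of equivalents rather than a set comparison.

```python
# Hypothetical shorthand for the three elements summarized above.
# These labels are illustrative assumptions, not actual claim language.
CLAIM_ELEMENTS = frozenset({
    "text_encoder",
    "embedding_sub_model",  # first sub-model: text input -> image embedding
    "image_sub_model",      # second sub-model: image embedding -> final image
})


def missing_elements(system_features):
    """Return the claim elements that the described system lacks."""
    return CLAIM_ELEMENTS - set(system_features)


def may_read_on(system_features):
    """True only if every claim element appears in the system.

    Extra features in the system do not matter; one missing element
    is enough to fall outside this simplified literal reading.
    """
    return not missing_elements(system_features)


two_stage_pipeline = {
    "text_encoder", "embedding_sub_model", "image_sub_model", "safety_filter",
}
single_stage_diffusion = {"text_encoder", "single_stage_diffusion"}

print(may_read_on(two_stage_pipeline))               # True
print(may_read_on(single_stage_diffusion))           # False
print(sorted(missing_elements(single_stage_diffusion)))
```

The more elements a claim recites, the more ways a competitor has to leave one out, which is why a precise claim tends to be narrower in practice.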

The Recentive problem

In April 2025, the Federal Circuit ruled in Recentive Analytics v. Fox Corp. that "claims that do no more than apply established methods of machine learning to a new data environment" are not eligible for patent protection under Section 101. The court also reasoned that iterative training and dynamic adjustment are "incident to the very nature of machine learning" and don't, on their own, make a claim patentable. To survive, an AI patent needs to show a genuine technical improvement to the machine learning itself, not just a new application of it.

Some of OpenAI's claims fit comfortably on the safe side of this line. The DALL-E patent describes a specific method, while the Whisper patent (U.S. Patent No. 12,079,587) covers a particular multi-task speech recognition architecture. These are the kinds of claims Recentive carved out room for.

Others are genuinely exposed. The text generation patent's Claim 10 describes a workflow that uses a language model for text editing without specifying any particular architecture, training method, or technical innovation in how the model operates. A defendant arguing that this merely applies generic LLM technology to a new task would likely have a credible case.

The Geography Problem

There is a structural gap in the portfolio that deserves close attention, though it is more nuanced than it first appears.

When the IPKat reviewed OpenAI's portfolio in August 2024, they noted that all published applications at that time were U.S. cases. Since the IPKat's review, some international filings have become visible. The code generation patent family (US12008341B2), for example, includes a PCT application, PCT/US2023/036505.

But the international coverage that is visible remains limited and late relative to the company's scale, and for several important patent families, the window for international protection has almost certainly closed.

For a company competing against DeepSeek in China, Mistral in France, and well-funded labs across Europe and Asia, limited international patent coverage is a significant strategic constraint. Most jurisdictions outside the U.S. require absolute novelty, meaning the invention cannot have been publicly disclosed anywhere before the filing date. The U.S. is unusual in offering a one-year grace period.

Consider DALL-E 2 as a concrete example. The research paper describing its architecture went live on arXiv on April 13, 2022. The patent application covering that architecture (US11922550B1) was filed on March 30, 2023, just inside the U.S. grace period. In Europe, where absolute novelty is required, the arXiv paper would constitute prior art. That particular invention is almost certainly unpatentable outside the United States, not because of a strategic decision, but because the paper was published before anyone filed.
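The date arithmetic behind that example can be checked with a short script. This is a rough sketch, not a legal determination: the function names are made up for illustration, the twelve-month window is modeled simply as the same calendar day one year later, and the only inputs are the two dates given above.

```python
from datetime import date


def grace_deadline(disclosure: date) -> date:
    """Same calendar day one year after disclosure (Feb 29 falls back to Feb 28)."""
    try:
        return disclosure.replace(year=disclosure.year + 1)
    except ValueError:
        return disclosure.replace(year=disclosure.year + 1, day=28)


def filing_status(disclosure: date, filing: date) -> dict:
    """Compare a filing date against a one-year grace period and absolute novelty."""
    deadline = grace_deadline(disclosure)
    return {
        "us_grace_deadline": deadline,
        "days_to_spare": (deadline - filing).days,
        "inside_us_grace_period": filing <= deadline,
        # Absolute-novelty systems need the filing to come before any disclosure.
        "filed_before_disclosure": filing < disclosure,
    }


# Dates from the DALL-E 2 example: arXiv paper, then the patent application.
status = filing_status(disclosure=date(2022, 4, 13), filing=date(2023, 3, 30))

print(status["us_grace_deadline"])        # 2023-04-13
print(status["days_to_spare"])            # 14
print(status["inside_us_grace_period"])   # True
print(status["filed_before_disclosure"])  # False
```

On these dates the application landed fourteen days inside the U.S. window, while the absolute-novelty test had already failed almost a year earlier.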

This pattern likely applies to other filings in the portfolio where arXiv publication preceded the patent application. It is a direct legacy of years spent as a research-first organization, where publication came before any consideration of IP protection. For those specific inventions, the international filing windows have closed for good. For newer filings, the PCT applications now surfacing suggest OpenAI has begun addressing the gap, though the full extent of their international strategy is not yet publicly visible.

WIPO flagged OpenAI's limited patent footprint in its generative AI patent landscape report, describing it as a strategic vulnerability. From a practitioner's perspective, it is the kind of gap that grows more expensive as the company grows, because the value of underprotected implementations keeps increasing while the ability to retroactively protect them diminishes.

What This Means

Musk once told an audience that OpenAI was supposed to be "the opposite of Google," which he described as "a closed-source for-profit company." His argument, in the lawsuit and in public, is that OpenAI betrayed its founding openness by becoming too commercial, too closed, too much like the company it was built to counterbalance.

If that were true, you'd expect the patent record to reflect it: an aggressive, expansive IP portfolio designed to lock out competitors. It shows the opposite.

Google has over fifteen thousand AI patents providing global protection across dozens of jurisdictions. OpenAI has roughly 42 grants concentrated primarily in one country, covering only the outermost layer of its technology stack, with limited international filings only recently becoming visible.

The patent record doesn't support Musk's diagnosis; it suggests a different one: that OpenAI's problem isn't that it abandoned openness too aggressively, but that it held onto openness too long on the IP front and is still living with the consequences.

A note on the pledge

OpenAI maintains a patent pledge on its website, stating that it will use its patents "in a way that supports innovation" and will support others working on AI model technology, with a catch: the pledge does not apply to anyone who engages in "activities that harm us or our users."

An analysis published in the Oxford Journal of Intellectual Property Law & Practice examined the pledge through a formal legal framework and flagged that condition as extraordinarily broad. The phrase could encompass competitive behavior, critical commentary, or business decisions that reduce OpenAI's market share. The pledge contains no definition of "harm," no limiting principle, no temporal commitment, and could in principle be revoked at any time. Anyone making business decisions in reliance on it should read the actual text carefully and probably with counsel.

What Founders Can Take From This

OpenAI's research-first culture meant papers hit arXiv months or years before anyone filed patents. For those inventions, that closed off international filing options permanently. If your company publishes research, presents at conferences, or demos products publicly, you are starting a clock. In the U.S., you have twelve months from public disclosure to file. In Europe and other absolute-novelty jurisdictions, you have zero. The USPTO filing fee for a provisional application is as low as $130 for small entities ($325 for large), but the real cost is the founder time and legal expertise needed to draft a disclosure that actually holds up. That's where services built for startup speed can make the difference.

Your IP strategy should be ahead of your business model, not behind it. OpenAI operated as a nonprofit for more than three years before creating a capped-profit subsidiary in 2019, and didn't file its first patent until 2022. By the time the IP strategy caught up to the business model, the most valuable filing windows had already closed. If you're a startup that might someday monetize your technology (and that's almost all of you), the time to think about IP is before the revenue arrives, not after.

Decide what gets patented and what stays secret. OpenAI's split between patenting product-specific implementations and keeping general-purpose foundational techniques as trade secrets is smart, and it's the one element of their strategy worth emulating directly. Not everything should be patented; innovations that are evolving fast, power multiple products, and can be kept secret may be better protected as trade secrets.

Think about geography from day one. Filing internationally is expensive, and not every startup needs global patent coverage. But if your competitors, customers, or potential business partners are outside the United States, protection concentrated in a single jurisdiction means you've disclosed your inventions to the world in exchange for limited legal rights. OpenAI appears to have begun addressing this with recent PCT filings, but for their earliest and most commercially significant inventions, the international windows had already closed. At minimum, understand what your options are before the absolute novelty deadlines pass.

Speed of grant matters less than timing of filing. OpenAI spent thousands of dollars per application on Track One expedited examination to get patents granted faster. That made sense for their situation, but the lesson for most founders is different: it's the filing date, not the grant date, that establishes your priority. A well-drafted provisional filed today, even at a fraction of law firm rates, gives you more strategic value than a $15,000 utility application filed next year.

All data from publicly available records: USPTO Patent Center, Google Patents (US11983488B1, US11922550B1, US11922144B1, US11886826B1, US12079587B2), WIPO's Patent Landscape Report on Generative AI, GreyB patent analytics, Originality.AI's OpenAI patent tracker, InQuartik's Patentcloud analysis, IPKat blog, Recentive Analytics v. Fox Corp. opinion (PDF), Oxford JIPLP pledge analysis, OpenAI's patent pledge, OpenAI's response to Musk lawsuit, OpenAI's founding blog post, Reuters on OpenAI $840B valuation, PCT/US2023/036505 (WO2024242700A1) on Google Patents.

This article is for informational and educational purposes only and does not constitute legal advice. Patent law is complex and fact-specific; the analysis presented here is based on publicly available records and should not be relied upon as a substitute for consultation with a qualified patent attorney or agent.